Contracting Around Generative Legal Writing

In a recent post, I looked at how AI vendors use contracts to disclaim responsibility for their products. Today I want to flip the lens and look at how some of the consumers of legal text—law reviews—are using contract-like instruments to police the production of AI-generated work.

My interest in this was recently piqued by getting a contract to publish Lessons in Contract with the Georgetown Law Journal. It originally included a nearly absolute prohibition on AI use. About a week later, the Journal made material amendments, and the term is now more calibrated in what it permits and forbids. The term, complete with footnotes, reads:

The Georgetown Law Journal is committed to keeping human-written work at the core of legal scholarship. The Author represents and warrants that

(i) the Author is the sole author of the Work;

(ii) the Work’s written text—its above-the-line content,1 below-the-line text,2 and below-the-line citation parentheticals3 has not been written with assistance from generative artificial intelligence in a manner that would constitute plagiarism—including, but not limited to, copying and pasting from generative artificial intelligence;4

(iii) if the Author used research assistance from generative artificial intelligence, any content produced from the research was then verified by a human researcher or writer;

…

The Georgetown Law Journal understands that many modern legal research tools incorporate artificial intelligence and the Journal acknowledges the modern necessity of using these tools; however, the Author understands that the Journal retains the right to void this Agreement should the Work contain clear signs of unverified generative artificial intelligence usage that is inconsistent with Paragraph III.1—including, but not limited to, hallucinated cases or quotations

I thought this was striking, not least because many faculty I’ve chatted with this year used AI to generate their abstracts (at least), and—if they cut and pasted—would have violated it. And there’s been talk on Bluesky and X suggesting that the total number of articles has increased as authors’ costs of production have plummeted.5 In a recent poll, Orin Kerr found that over 40% of his survey respondents disclosed that AI wrote at least part of their articles this last cycle.

I had the chance to talk with Georgetown’s EIC about the contract term, and her concerns about AI-generated research got me wondering whether this was a widespread move by journal editors against AI in legal scholarship, or a one-off. So I decided to find out.

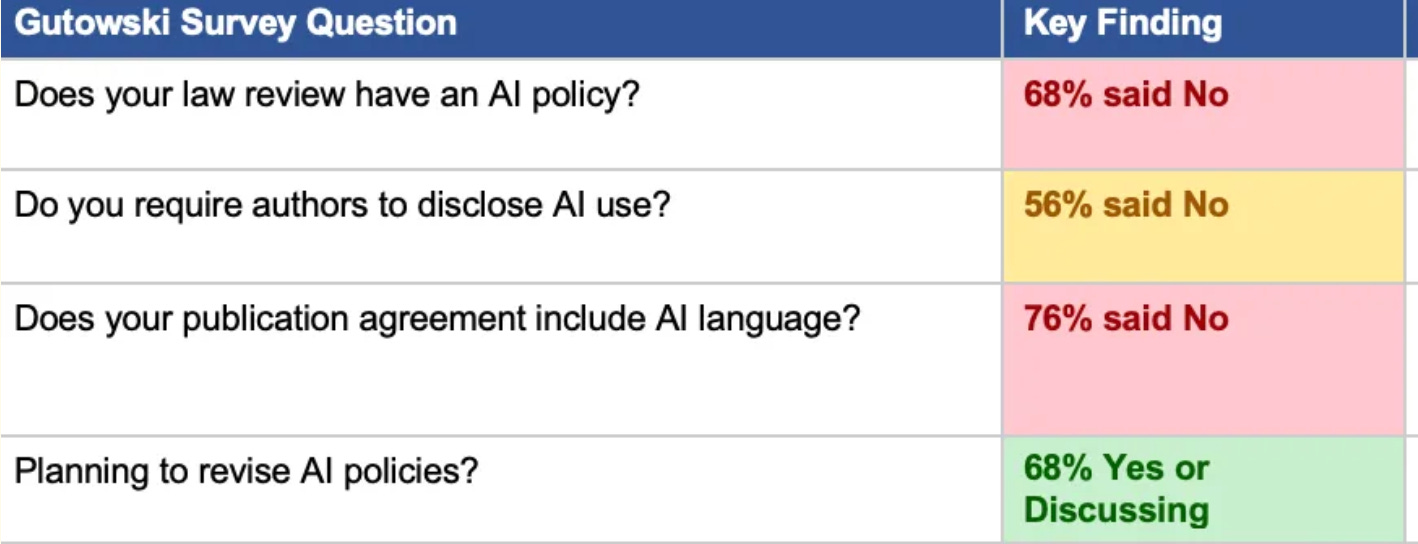

There’s prior art here, and a smattering of recent papers. In a survey of law review editors from last year, Nachman Gutowski found that the majority of law reviews have no AI policy whatsoever.

But, as Gutowski found, engaging in research about journals’ policies is challenging at scale. Only rarely do journals make their publishing agreements public. Their real policies about publication are similarly tough to track down — his survey promised anonymity to gather data.

I decided to update Gutowski’s findings. I tasked Claude Cowork to crawl every law journal found on Scholastica — roughly 580 sites — and collect (1) whether a sample publication agreement was available; (2) if not, whether there was any form of publication guidelines; and (3) whether it talked about AI. I did a small sample check of the results, and it turned out to be a bit underinclusive — Claude, whatever it was told, tends to slack and didn’t always check the individual journal webpages (or tell me it couldn’t find them). So take what follows as a slightly conservative estimate of the phenomenon. If I had used RAs, I’d have more confidence in the results. But they would’ve taken weeks to collect, not under an hour.

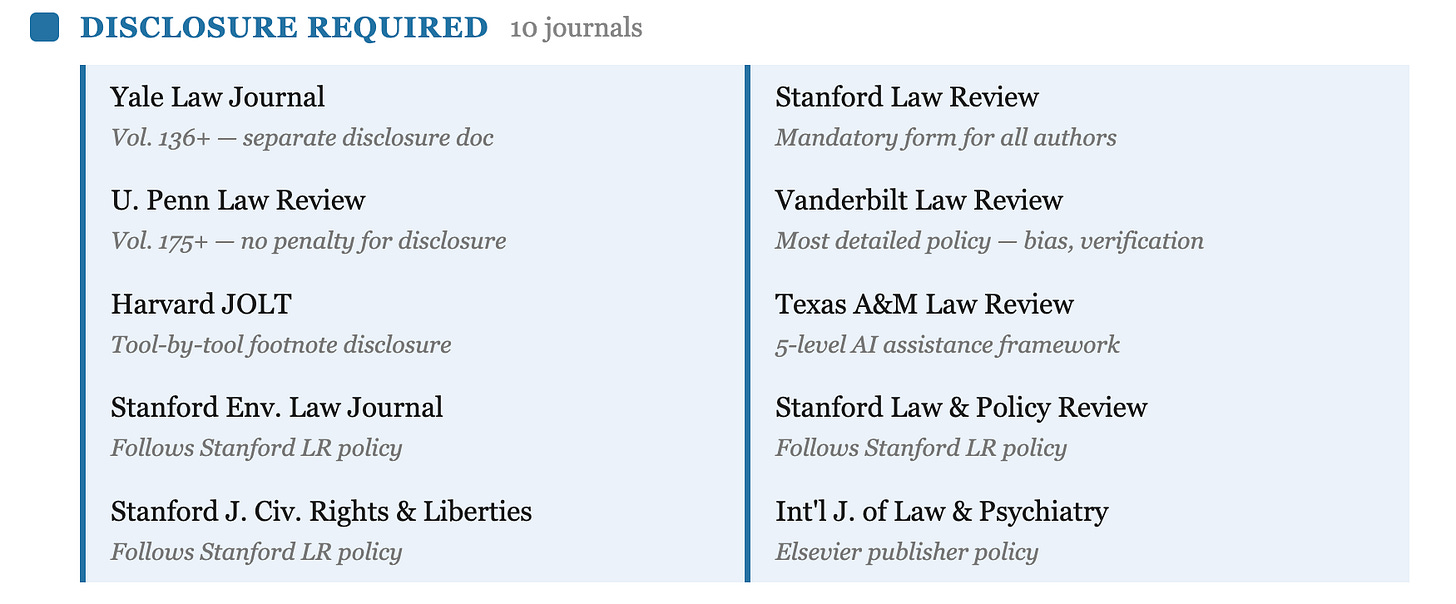

To start, only of the top 50 journals have an AI policy, roughly in line with Gutowski’s 16% in late 2024. But outside of that top echelon, disclosure of AI policies is quite rare. Only ~3% of the 587 journals identified had an AI policy that I could access through their public websites; only 8 in total had AI provisions in publicly available contracts. Of those with some kind of policy available, I found only 5 had a prohibition or a warranty against AI use of some form (like Georgetown), 10 required disclosure of AI use, 2 required acknowledgement of AI use, and 1 encouraged but did not require disclosure.

There really aren’t many anti-AI warranties at the moment: Georgetown (and the annual review it publishes), Virginia’s Journal of Law and Politics, Virginia’s Journal of International Law, Yale’s Journal of Law and the Humanities, and Mitchell Hamline Law Review.6

Compare that to the disclosure model. The Yale Law Journal, beginning with Volume 136, requires a brief statement on AI usage but notes that it “will not have a per se negative impact on the consideration of your piece.” (Per se: the Yalies can’t help themselves!) Yale also introduced something I haven’t seen elsewhere: an explicit warning about hallucinated sources, which it says will “strongly impact” the evaluation committees’ review, if they learn about them.

The Harvard Journal of Law & Technology goes further in its permissiveness: authors may use generative AI “at any stage in the writing process, so long as all such usage is disclosed.” The disclosure goes in the title page footnote—specifying the tool used and the function (drafting, copy-editing, research, idea generation). The Stanford Law Review requires disclosure of AI use that “has significantly affected the substance, originality, or reliability of the submission”—a threshold that introduces a judgment call authors must make for themselves. Penn’s Law Review requires authors to “disclose any use of artificial intelligence (AI) in the preparation of their submissions” starting with Volume 175. Like Yale, Penn promises that “disclosure of AI usage will not, in and of itself, weigh against consideration of their submissions.”

Penn apparently requires that cover letters—which I thought were a dead letter and which I don’t even write these days—state:

Which AI tools, if any, were employed;

How these tools assisted in the research process; and

How these tools assisted in drafting or revising the manuscript.

Vanderbilt’s policy is the most granular among the top general reviews: authors must use AI “responsibly, ethically, and transparently,” verify AI-generated claims, guard against AI-introduced bias, and “independently produce all substantive aspects of their work.”

Other journals require some sort of AI acknowledgment: the Texas Law Review simply notes that “Authors are fully responsible for the content, sources, and integrity of their submissions, including when using AI”.

Contract or Guidelines?

What’s notable is not just the spectrum but the mechanism. Some of these rules live on submissions pages—essentially pre-contractual representations that may or may not be incorporated into the final publication agreement, and frankly might be more performative than real. Others, like Georgetown’s, are contractual warranties in the publication license itself.

The contract person in me says that this is a distinction with a difference: a disclosure statement on a website is guidance that’s not obviously enforceable; a warranty in an executed agreement could have teeth. Whether any law review would actually sue an author over a breached AI warranty, or rescind an offer of publication, is of course a separate question. (To be fair, I have recently heard a badly sourced rumor about someone having their offer rescinded when cite checking found a hallucinated citation.)

It’s notable that publication agreements are hard to access before you’ve been offered and accepted publication. Unlike submission guidelines, which are public, the actual contract you’ll sign typically doesn’t surface until you’ve turned down all your alternatives. Fortunately, Georgetown discloses in its submission guidelines that some such term is coming. But nothing requires journals to be so explicit on the front end, and I’m sure that some aren’t.

What’s Changed Since the Gutowski Survey

Gutowski’s survey was fielded in approximately fall 2024. Since then, the disclosure consensus has hardened a bit. Every flagship general law review that has adopted a policy since Gutowski’s survey has chosen some form of disclosure—and none, apart from Georgetown, has moved toward stronger forms of prohibition.

I find the disclosure path unsurprising, but also misguided. Like Kevin Frazier and Alan Rozenshtein, who recently wrote on AI’s use in legal scholarship, I think the arguments for disclosing AI use really don’t work, except (as they say) to normalize AI use in a transitional moment. But it’s clear enough why the journals which have thought about this are pushing disclosure: it’s a cheap political solution to a problem that might feel exigent.

By Way of Comparison, Look at Courts’ Standing Orders

Law reviews aren't the only legal institutions reaching for contractual mechanisms to govern AI-generated text. Courts have been doing the same thing, often more aggressively. AI-drafted briefs are always in the news. After Mata v. Avianca—where lawyers submitted ChatGPT-generated briefs stuffed with fabricated citations—judges across the country began issuing standing orders that function as quasi-contractual conditions of practice.

The approaches vary wildly. In the Northern District of Texas, Judge Brantley Starr was first to require attorneys to certify either that no generative AI was used or that any AI-generated content was verified using traditional research tools. In Florida, the Eleventh and Seventeenth Judicial Circuits mandate disclosure on the face of the filing plus a certification that all citations were independently verified. The Eastern District of Pennsylvania has Judge Baylson’s standing order requiring affirmative disclosure plus citation verification. In New York, individual judges have their own rules—some require disclosure, others require certification, still others just remind you that Rule 11 exists.

These aren’t contracts in any real sense, but they operate like conditions of access. File in my courtroom, and you agree to these terms. The certification requirement in particular looks a lot like a warranty: by signing the filing, you’re representing that the text meets certain conditions regarding its provenance. Breach that representation, and you face sanctions—which, unlike the law review context, courts actually impose.

The Fifth Circuit, by contrast, considered and rejected a proposed AI disclosure rule. The court’s statement was terse: lawyers are already responsible for the accuracy of their filings, and “‘I used AI’ will not be an excuse for an otherwise sanctionable offense.” The Fifth Circuit’s view is essentially that existing rules—Rule 11’s certification requirement, duties of candor to the tribunal—already create the relevant obligations, and AI-specific mandates are redundant.

Private Ordering All the Way Down

What connects the anti-AI warranties, the Florida certification, and the Fifth Circuit's shrug is that they're all attempts at the same thing. If you squint, all of these mechanisms—publication warranties, standing orders, certification requirements—are instances of private or quasi-private ordering of legal knowledge workers. And to what end?

The variety here is striking. Mitchell Hamline has a broad prohibition:

The Law Review does not accept submissions that have been generated, in whole or in part, by artificial intelligence (“AI”) and reserves the right to cancel publication of a submission if it determines the piece contains AI-generated content.

Stanford asks authors to assess whether their AI use “significantly affected” their submission. The Fifth Circuit says existing rules are sufficient. A Florida circuit court says you have to disclose on the face of the filing. The Texas A&M Journal of Property Law published a whole AI-assisted volume and proposed a five-level taxonomy. And the boundary question—when does a grammar tool become generative AI, when does a search suggestion become an AI-assisted draft—remains unresolved everywhere.

What’s going on here? My sense isn’t entirely captured by the cynical view (the one you see in responses to NY’s draft bill to deter AI legal advice), which holds that legal institutions are grasping for tools to build the walls around our business a bit higher.

I think it’s something more Butlerian — an aesthetic preference against AI text, perhaps rooted in a conviction that the struggle to articulate ideas in prose is itself part of what makes scholarship valuable. Note that Georgetown puts “human-written work” at the core of its policy. Not “accurate work” or “original work” or even (in the words of every deputy dean ever) “impactful work” — but human-written work. That reads to me as a claim that legal scholarship is an enterprise whose value depends in part on a person sitting with a problem and working through it in language. We literally respect and value the craft, not the product.

There’s something to this. The conventional defense of writing-as-thinking — that you don’t really understand an argument until you’ve tried to put it in your own words — has gravity. Anyone who has used Claude to draft a section and then realized that the fluent paragraphs it produced papered over a gap in their reasoning knows the feeling. (Heck, I absolutely hated the first version of what Claude suggested here, so I wrote it differently, perhaps better, and definitely with more typos.) If scholarship is supposed to be a record of thought, not just a vehicle for conclusions, then maybe the process matters in ways that survive the observation that the output looks the same either way.

But I don’t ultimately share the Butlerian instinct, for the same reason I don’t think the hand-copying of manuscripts was essential to medieval scholarship even though monks surely thought it was, and it produced artifacts of exceptional beauty. Tools change what authorship looks like without necessarily changing what it’s for. The question isn’t whether AI touches the pen, but whether a human mind is doing the intellectual work that the text purports to represent.7

Georgetown’s warranty, read carefully, actually gets at this: what it prohibits isn’t AI assistance but AI authorship, and it carves out research tools explicitly. That’s a defensible line. The problem—as Frazier and Rozenshtein aptly observe—is that it’s a line that will become increasingly hard to draw, as the tools get better and the boundary between “research assistance” and “drafting assistance” dissolves into a spectrum that no contractual definition can cleanly capture. Or at least none can do so when journals’ enforcement tools are likely so poorly thought through.

I genuinely don’t know what equilibrium looks like here. Maybe disclosure norms will settle in as the default, as Nayar and Cooper argue here, the way conflict-of-interest disclosures did in other professional contexts. Maybe the prohibition model will prove unenforceable as AI becomes indistinguishable from the word processors and research platforms everyone already uses. Maybe the Fifth Circuit’s view—existing rules are enough, stop inventing new ones—will win out. Or maybe the Georgetown model—contractual warranty—will gain traction among editors and courts that see themselves as guardians of a distinctly human scholarly enterprise.

What I can say is that this contract-first regulatory regime looks a lot like how legal institutions have responded to other technological changes. Term by term, we lawyers can’t help but use contracts to govern social problems, which leads to a diverse set of competing privately-ordered solutions, and, sometimes, plenty of confusion about what is and isn’t permitted.

But that's the macro view. For now, my genuine advice to risk-averse authors is to read the fine print before having Claude polish your drafts.

“‘Above-the-line content’ refers to all content that appears above the citation division on the page—including, but not limited to, textual sentences, visual diagrams, and charts.”

“Below-the-line text” refers to all textual sentences within footnotes and excludes citation sentences.

“Below-the-line citation parentheticals” refers to all explanatory phrases within parentheses at the end of a legal citation.

The Georgetown Law Journal does not consider spell check or grammar check to be generative artificial intelligence. Brainstorming with the assistance of generative artificial intelligence is not prohibited.

I am skeptical this is real.

The clustering of policies at the university level suggests that GCs are likely to be playing an underappreciated role.

It’s unclear what Dune’s Butlerian jihadists would actually think of LLMs as text generators. “Thou shalt not make a machine in the likeness of a human mind” doesn’t mean you can’t have it draft ad copy. And the sequels, to the extent they bear on original meaning, absolutely read like they were drafted by an early-version chatbot.